Essential Best Practices for Data Platform Engineering Success

Introduction

The landscape of data platform engineering is evolving rapidly, driven by an increasing reliance on data for strategic decision-making across various industries. As organizations strive to leverage their data effectively, the role of a data platform engineer becomes crucial. This role encompasses a range of responsibilities, from designing robust architectures to ensuring compliance with stringent regulations.

However, the rapid advancements in technology and the complexities of data management raise a critical question: how can organizations adopt the best practices necessary for success? This article explores essential strategies that not only enhance data platform engineering but also position organizations to thrive in a data-driven future.

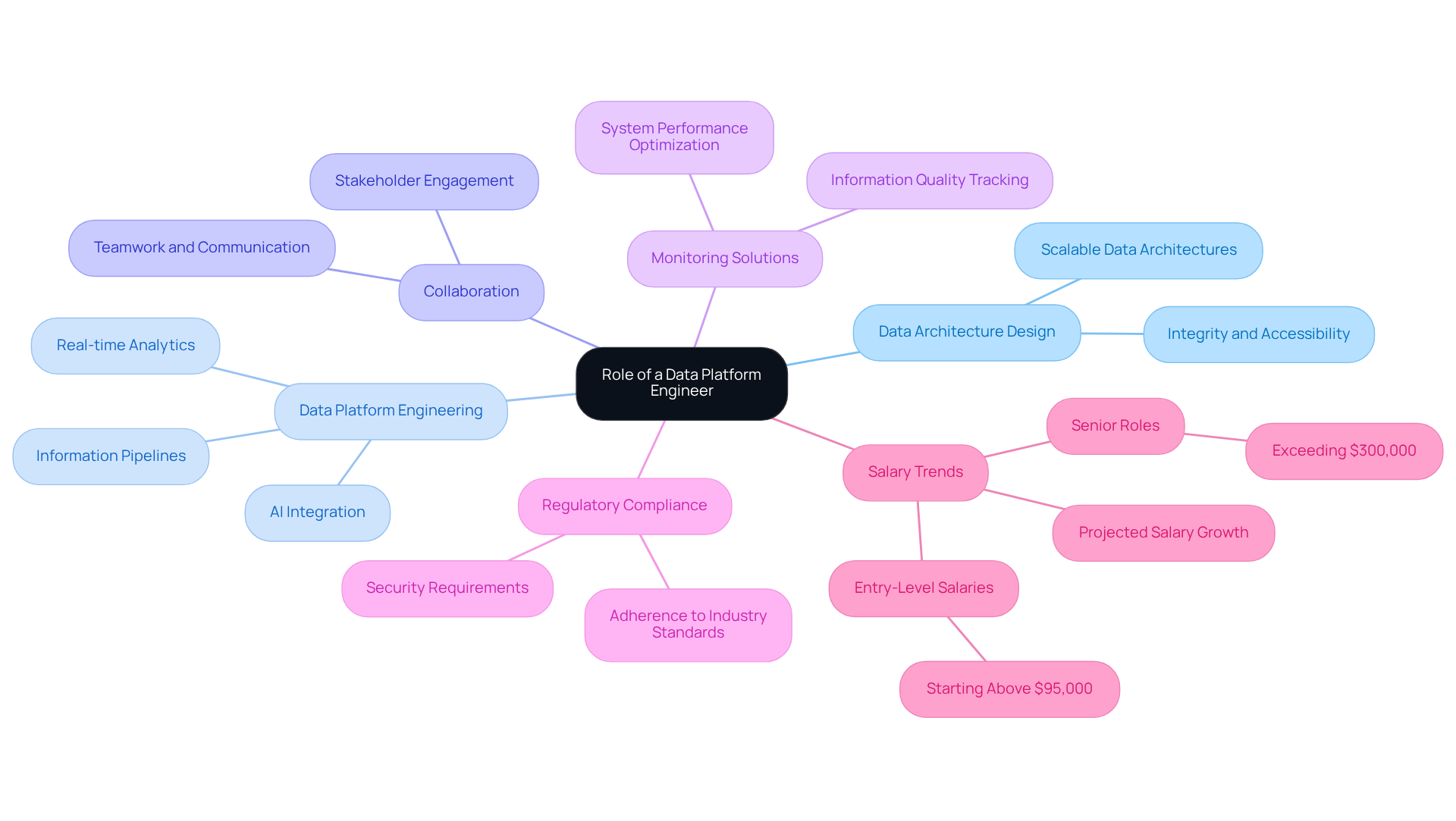

Define the Role of a Data Platform Engineer

A Data Platform Engineer designs, builds, and maintains the infrastructure that supports data processing, storage, and analysis. This multifaceted position encompasses several key responsibilities:

- Data Architecture Design: Engineers create scalable, efficient data architectures that can manage large data volumes while preserving integrity and accessibility. As organizations rely more heavily on data-driven decision-making, the demand for robust architecture continues to grow.

- Pipeline Development: They build and refine data pipelines that move data reliably from source systems to storage and analytical tools, which is essential for real-time analytics and AI integration.

Collaboration is equally essential. Engineers work closely with data scientists, analysts, and business stakeholders to understand their data needs and ensure the platform aligns with organizational requirements. At Neutech, we prioritize hiring engineers who excel in communication and teamwork, so our clients receive not only technical expertise but also the interpersonal skills that foster effective collaboration.

Engineers also implement monitoring solutions that track data quality and system performance, then adjust the platform as needed to keep data delivery reliable. Neutech’s commitment to reliability is reflected in our high employee retention rates, which allow us to provide consistent and dependable engineering talent.
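As a rough illustration of the kind of data-quality check such monitoring might run, the sketch below computes per-column null rates for a batch of records and flags any column that exceeds a threshold. The function `check_batch`, the sample fields, and the thresholds are hypothetical; in practice, teams often rely on a dedicated data-quality or observability tool rather than hand-written checks.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class QualityReport:
    """Simple data-quality metrics for one batch of records."""
    row_count: int
    null_rate: dict[str, float]  # fraction of rows where each column is missing or None
    failed_checks: list[str] = field(default_factory=list)


def check_batch(rows: list[dict[str, Any]], required: list[str],
                max_null_rate: float = 0.01, min_rows: int = 1) -> QualityReport:
    """Compute null rates for required columns and flag threshold breaches.

    Thresholds are illustrative assumptions, not recommended values.
    """
    n = len(rows)
    failed: list[str] = []
    if n < min_rows:
        failed.append(f"row_count {n} < {min_rows}")
    null_rate: dict[str, float] = {}
    for col in required:
        missing = sum(1 for r in rows if r.get(col) is None)
        # An empty batch is treated as fully null so it cannot pass silently.
        rate = missing / n if n else 1.0
        null_rate[col] = rate
        if rate > max_null_rate:
            failed.append(f"{col} null_rate {rate:.2%} > {max_null_rate:.2%}")
    return QualityReport(n, null_rate, failed)


# Hypothetical batch: one of four records is missing its amount.
batch = [
    {"account_id": 1, "amount": 120.0},
    {"account_id": 2, "amount": None},
    {"account_id": 3, "amount": 75.5},
    {"account_id": 4, "amount": 10.0},
]
report = check_batch(batch, required=["account_id", "amount"], max_null_rate=0.10)
if report.failed_checks:
    print("ALERT:", "; ".join(report.failed_checks))
```

In a real platform, a check like this would run on every pipeline load, and its failures would feed an alerting system rather than `print`.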

In regulated sectors such as finance and healthcare, data platform engineers must also ensure that data-handling practices comply with industry regulations and security standards. Neutech specializes in providing data platform engineering talent that understands the complexities of regulated industries, with flexible scaling options to meet evolving client needs.

Salaries for Platform Engineers in financial services and healthcare are expected to rise substantially in 2026, with entry-level salaries starting above $95,000 and senior positions exceeding $300,000. This trend reflects the growing demand for skilled professionals in these fields. Industry leaders stress that effective data architecture design is not merely a technical necessity but a strategic advantage, which underscores the importance of this role. By clearly defining these responsibilities, organizations can improve how they recruit and retain skilled data platform engineers and ensure those engineers are well prepared to advance their data initiatives.

Design Robust Data Architecture

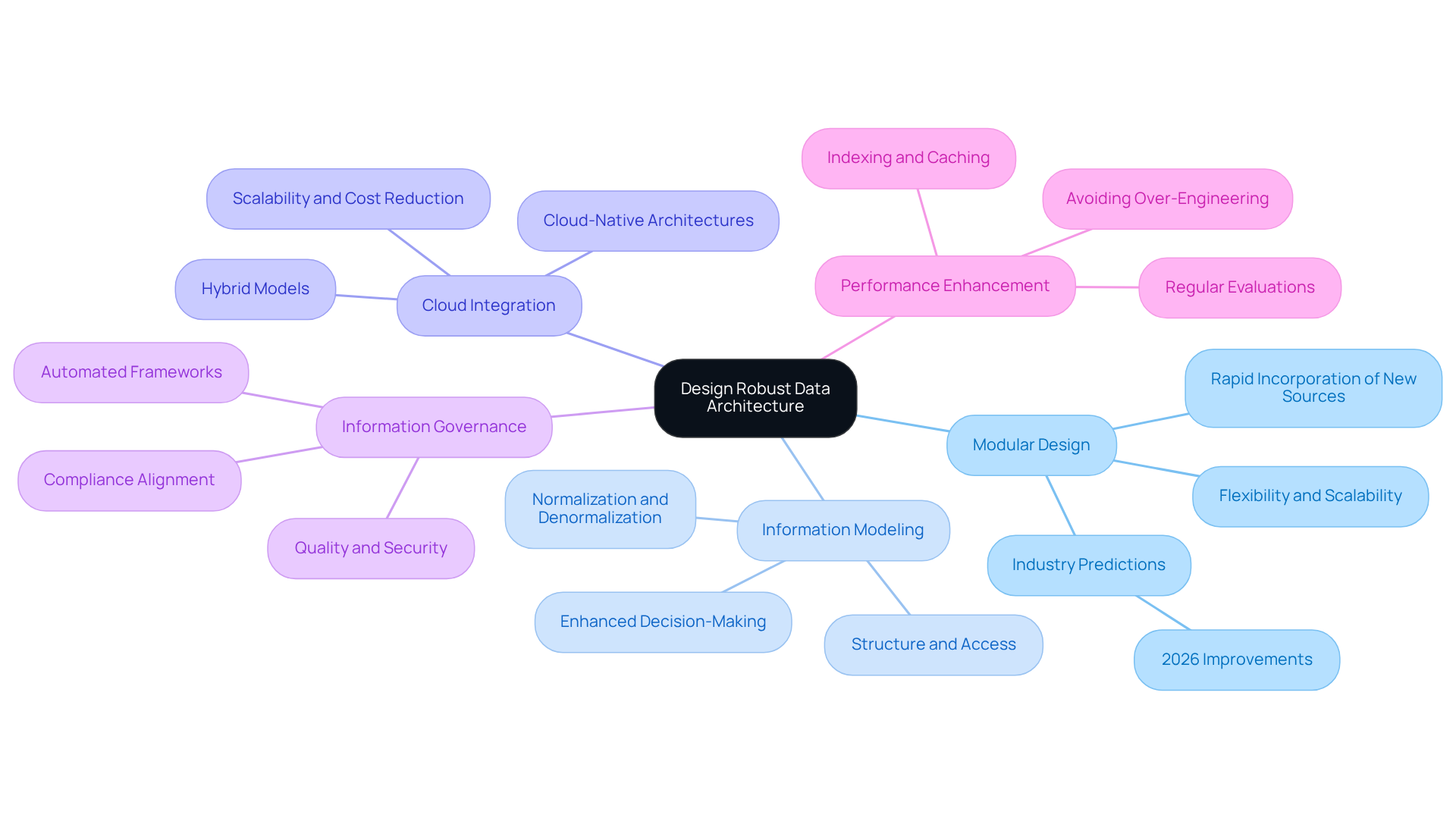

To design a robust data architecture, consider the following best practices:

- Modular Design: A modular architecture provides flexibility and scalability, allowing teams to adapt to changing data requirements without a complete system overhaul. Financial services companies, for instance, can use modular designs to incorporate new data sources quickly and keep pace with regulatory changes. Industry predictions indicate that, by 2026, organizations adopting modular architectures will be noticeably better able to respond to market changes and regulatory requirements.

- Data Modeling: Effective data modeling defines how data is structured, stored, and accessed. Normalization reduces redundancy and protects consistency, while selective denormalization can speed up read-heavy workloads such as real-time analytics. Recent case studies suggest that organizations that prioritize strong data modeling see improved decision-making capabilities.

- Cloud Integration: Cloud technologies improve scalability and can reduce infrastructure costs. Cloud platforms provide storage, processing, and analytics services that scale elastically as data volumes grow, which suits organizations in dynamic sectors such as finance. The shift toward cloud-native architectures is expected to continue, with many organizations adopting hybrid models that combine cloud and on-premises infrastructure.

- Data Governance: Clear data governance policies are vital for ensuring quality, security, and compliance. This includes defining roles and responsibilities for data management and implementing stewardship practices aligned with industry regulations. Governance is increasingly shifting toward automated, AI-enforced frameworks, which can strengthen compliance and reduce manual oversight.

- Performance Enhancement: Regularly evaluating and tuning the architecture keeps it performing well. Techniques such as indexing, data partitioning, and caching can significantly reduce retrieval times, which is critical for timely decision-making in financial services. Teams should also avoid common pitfalls such as over-engineering pipelines too early, which adds unnecessary complexity and can hinder performance.
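The modular-design idea above can be sketched as a pipeline assembled from small, interchangeable stages, where each stage is a function that takes records and returns records. The stage names (`drop_nulls`, `add_converted_amount`) and the fixed exchange rate are hypothetical examples, not a prescribed framework. The point is that adding a data source or rule means adding or swapping a stage rather than rewriting the pipeline.

```python
from typing import Any, Callable, Iterable

Record = dict[str, Any]
Stage = Callable[[Iterable[Record]], Iterable[Record]]


def drop_nulls(field: str) -> Stage:
    """Build a stage that removes records whose `field` is missing or None."""
    def stage(records: Iterable[Record]) -> Iterable[Record]:
        return (r for r in records if r.get(field) is not None)
    return stage


def add_converted_amount(rate: float) -> Stage:
    """Build a stage that adds `amount_usd` using a fixed, illustrative rate."""
    def stage(records: Iterable[Record]) -> Iterable[Record]:
        # Build new dicts so upstream records are never mutated.
        return ({**r, "amount_usd": round(r["amount"] * rate, 2)} for r in records)
    return stage


def run_pipeline(records: Iterable[Record], stages: list[Stage]) -> list[Record]:
    """Apply each stage in order; generators stay lazy until materialized here."""
    for stage in stages:
        records = stage(records)
    return list(records)


source = [
    {"id": 1, "amount": 100.0},
    {"id": 2, "amount": None},
    {"id": 3, "amount": 50.0},
]
# Adding, removing, or reordering stages does not require touching the others.
result = run_pipeline(source, [drop_nulls("amount"), add_converted_amount(1.1)])
```

Because every stage shares the same signature, stages can be tested in isolation and reused across pipelines. That shared signature is what makes the design modular.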

By following these practices, organizations can build a data architecture that supports their current business goals and adapts to future challenges, keeping data management resilient and effective.
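Partitioning, one of the performance techniques listed above, is easiest to see in miniature. The sketch below groups rows into monthly partitions and answers a date-range query by scanning only the partitions that can contain matches (partition pruning). The in-memory dictionary stands in for what a warehouse or table format would store as separate files or segments; the function names and the `event_date` field are assumptions for illustration.

```python
from collections import defaultdict
from datetime import date
from typing import Any

Row = dict[str, Any]
PartitionKey = tuple[int, int]  # (year, month)


def partition_by_month(rows: list[Row]) -> dict[PartitionKey, list[Row]]:
    """Group rows into partitions keyed by the (year, month) of `event_date`."""
    partitions: dict[PartitionKey, list[Row]] = defaultdict(list)
    for row in rows:
        d = row["event_date"]
        partitions[(d.year, d.month)].append(row)
    return dict(partitions)


def query_range(partitions: dict[PartitionKey, list[Row]],
                start: date, end: date) -> tuple[list[Row], int]:
    """Return rows with start <= event_date <= end and how many partitions were scanned."""
    lo, hi = (start.year, start.month), (end.year, end.month)
    matches: list[Row] = []
    scanned = 0
    for key, rows in partitions.items():
        # Partition pruning: months outside [lo, hi] cannot contain matches.
        if key < lo or key > hi:
            continue
        scanned += 1
        matches.extend(r for r in rows if start <= r["event_date"] <= end)
    return matches, scanned


rows = [
    {"id": 1, "event_date": date(2024, 1, 15)},
    {"id": 2, "event_date": date(2024, 2, 3)},
    {"id": 3, "event_date": date(2024, 2, 20)},
    {"id": 4, "event_date": date(2024, 3, 9)},
]
parts = partition_by_month(rows)
matches, scanned = query_range(parts, date(2024, 2, 1), date(2024, 2, 29))
```

Here only the February partition is read. On large tables, skipping whole partitions in this way is where most of the speed-up comes from.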

Implement Data Security and Compliance Measures

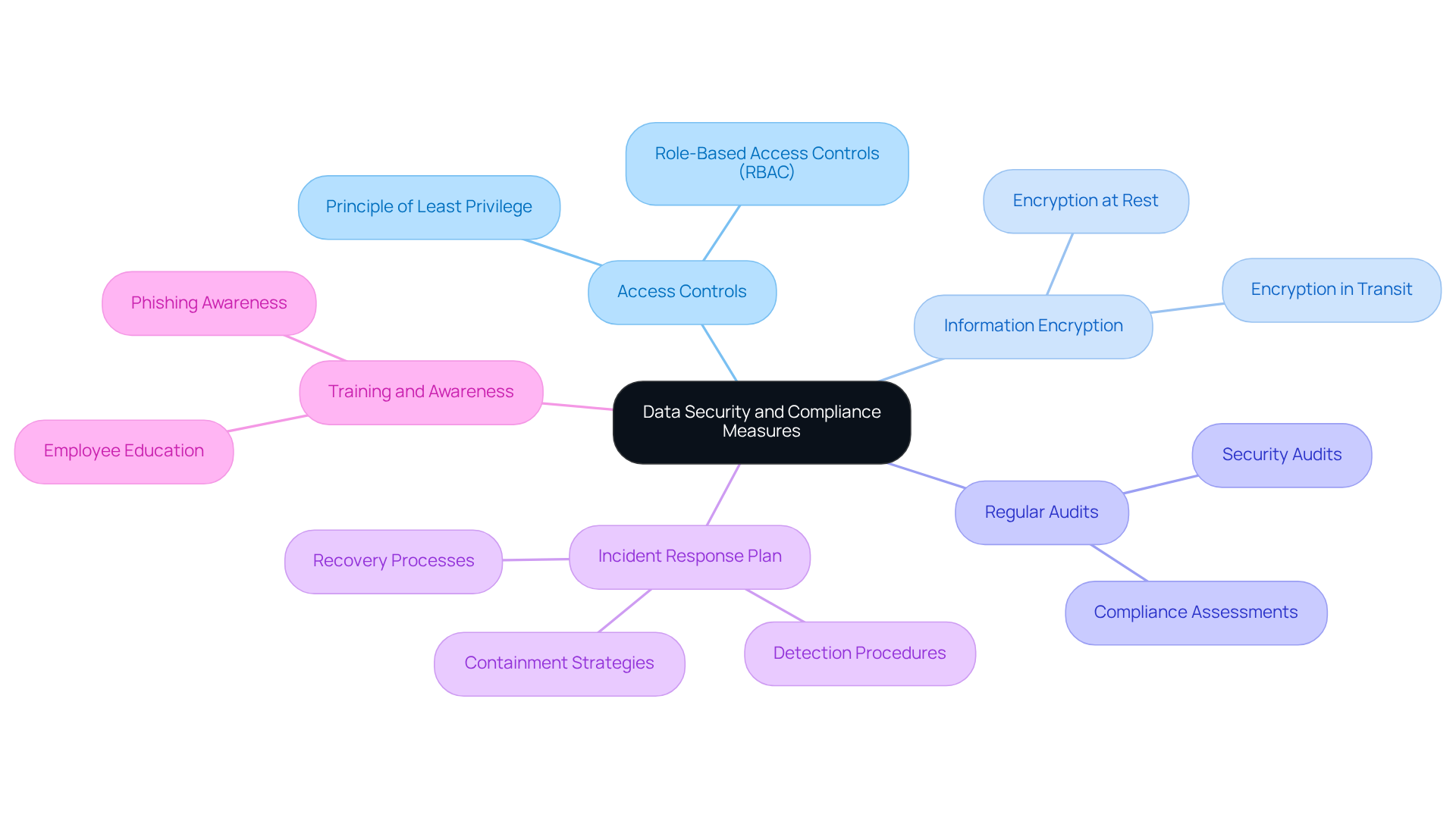

To effectively implement data security and compliance measures, organizations should adopt several best practices:

- Access Controls: Establish strict access controls so that only authorized personnel can reach sensitive data. Role-based access control (RBAC) grants permissions by role, which helps ensure that each user holds only the minimum permissions their work requires (the principle of least privilege).

- Data Encryption: Encrypt data both at rest and in transit to protect it from unauthorized access. This is particularly important for financial records and personal health information.

- Regular Audits: Conduct regular security audits and compliance assessments to identify vulnerabilities and confirm adherence to regulations and industry standards such as GDPR, HIPAA, and PCI DSS.

- Incident Response Plan: Create and maintain an incident response plan so that potential breaches are addressed promptly. The plan should outline procedures for detecting, containing, and recovering from security incidents.

- Training and Awareness: Provide continuous education on data security best practices and compliance requirements to cultivate a culture of security awareness across the organization.
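To make the RBAC and least-privilege points concrete, here is a minimal sketch of a deny-by-default permission check. The roles, permissions, and the `is_allowed` helper are hypothetical. Real platforms usually enforce RBAC in the database, cloud IAM, or an identity provider rather than in application code.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_TRANSACTIONS = auto()
    WRITE_TRANSACTIONS = auto()
    READ_PII = auto()
    MANAGE_USERS = auto()


# Hypothetical roles: each grants only what that job function needs.
ROLE_PERMISSIONS: dict[str, frozenset[Permission]] = {
    "analyst": frozenset({Permission.READ_TRANSACTIONS}),
    "pipeline_service": frozenset({Permission.READ_TRANSACTIONS,
                                   Permission.WRITE_TRANSACTIONS}),
    "compliance_officer": frozenset({Permission.READ_TRANSACTIONS,
                                     Permission.READ_PII}),
    "admin": frozenset(Permission),  # every permission; assign sparingly
}


def is_allowed(user_roles: set[str], needed: Permission) -> bool:
    """Deny by default: allow only if at least one assigned role grants `needed`.

    Unknown roles grant nothing, so a typo in a role name fails closed.
    """
    return any(needed in ROLE_PERMISSIONS.get(role, frozenset())
               for role in user_roles)


print(is_allowed({"analyst"}, Permission.READ_PII))
print(is_allowed({"analyst", "compliance_officer"}, Permission.READ_PII))
```

Keeping the role-to-permission mapping in one place also makes the regular audits described above easier. Reviewers can read exactly what each role grants.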

By employing these measures, companies can significantly reduce the risk of breaches and ensure compliance with regulatory standards.

Optimize Data Storage and Retrieval Processes

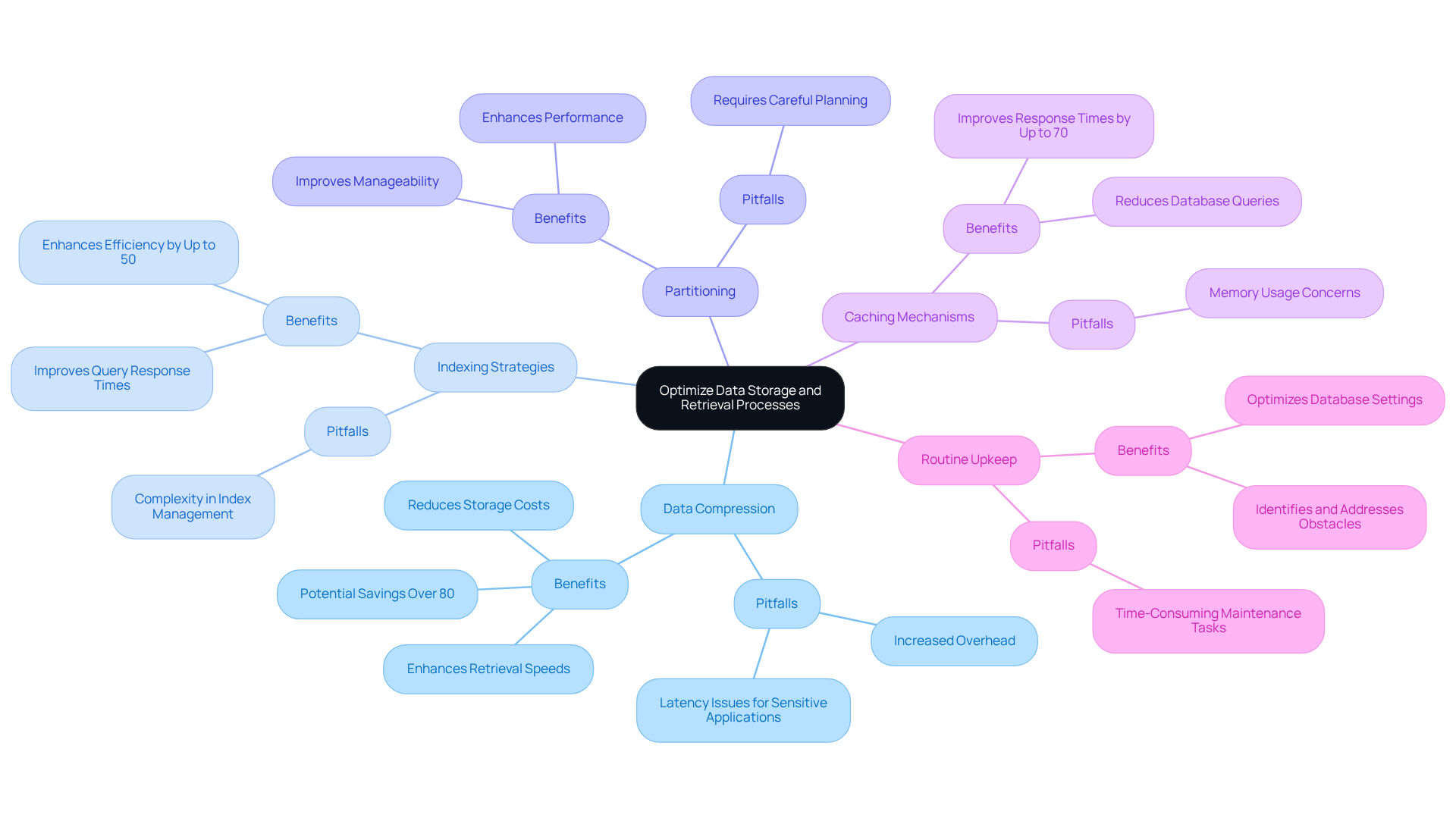

To optimize data storage and retrieval processes, organizations should adopt several key practices:

- Data Compression: Compression reduces storage costs and can speed up retrieval, because less data has to be read from disk or sent over the network. For highly repetitive data, savings can exceed 80%, which significantly lowers overall storage expenses. However, higher compression ratios usually cost more CPU time to compress and decompress, which can hurt latency-sensitive applications.

- Indexing Strategies: Well-designed indexes can substantially reduce query response times, especially on large datasets, where the right indexing approach can improve performance by up to 50%. This echoes Geoffrey Moore’s point that data is critical to informed business decisions: efficient retrieval directly supports timely insights.

- Partitioning: Dividing large tables into smaller segments, for example by date, improves both performance and manageability, because queries can skip segments that cannot contain matching rows. This is particularly useful in regulated industries, where fast retrieval is paramount.

- Caching Mechanisms: Caching keeps frequently accessed data in memory, reducing repeated database queries. This can improve response times by up to 70%, enhancing both user experience and operational efficiency.

- Routine Maintenance: Regular maintenance of storage systems includes removing outdated data, tuning database settings, and monitoring performance metrics to identify and address bottlenecks proactively. As Marissa Mayer emphasizes, the sooner companies begin gathering and managing data, the sooner they can benefit from its insights.
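The compression trade-off described above can be measured directly. The sketch below uses Python's standard `zlib` module to compare size and compression time at several levels on a synthetic, deliberately repetitive sample. The `compression_profile` helper and the sample data are assumptions for illustration. Actual ratios and timings depend heavily on the data and the codec, so they should be measured on representative data before choosing a level.

```python
import time
import zlib


def compression_profile(data: bytes,
                        levels: tuple[int, ...] = (1, 6, 9)) -> dict[int, tuple[int, float, float]]:
    """For each zlib level, return (compressed size, size ratio, compression seconds)."""
    results: dict[int, tuple[int, float, float]] = {}
    for level in levels:
        start = time.perf_counter()
        compressed = zlib.compress(data, level)
        elapsed = time.perf_counter() - start
        # Compression must be lossless: verify the round trip before trusting the numbers.
        if zlib.decompress(compressed) != data:
            raise ValueError(f"round trip failed at level {level}")
        results[level] = (len(compressed), len(compressed) / len(data), elapsed)
    return results


# Synthetic, log-like sample with many repeated lines (highly compressible).
sample = b"".join(b"2024-01-01,ACCT-%05d,DEBIT,100.00\n" % (i % 500)
                  for i in range(20_000))
profile = compression_profile(sample)
for level, (size, ratio, secs) in profile.items():
    print(f"level {level}: {size} bytes ({ratio:.1%} of original), {secs * 1000:.2f} ms")
```

Higher levels typically spend more CPU time for progressively smaller gains. That is why a mid-range level is a common default for data that is read far more often than it is written.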

By adhering to these strategies, organizations can significantly improve storage and retrieval performance, leading to greater efficiency and user satisfaction. Be mindful of common pitfalls, however. Heavily compressed data can be more complex to manage, and indexes need careful planning, because every additional index slows writes and consumes storage.
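The effect of an index, and the reason to plan indexes deliberately, can be observed with SQLite from Python's standard library. The sketch below asks SQLite's `EXPLAIN QUERY PLAN` how it will run a lookup before and after creating an index. The table, column, and index names are hypothetical, and the exact wording of the plan text varies between SQLite versions.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list[str]:
    """Return the human-readable detail column of SQLite's EXPLAIN QUERY PLAN."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE transactions "
             "(id INTEGER PRIMARY KEY, account_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO transactions (account_id, amount) VALUES (?, ?)",
    ((i % 1000, float(i)) for i in range(10_000)),
)

lookup = "SELECT amount FROM transactions WHERE account_id = ?"
before = query_plan(conn, lookup, (42,))  # no index yet: expect a full table scan

# The index speeds up this lookup but must be maintained on every insert and update.
conn.execute("CREATE INDEX idx_transactions_account ON transactions (account_id)")
after = query_plan(conn, lookup, (42,))   # planner should now search via the index

print("before:", before)
print("after: ", after)
```

Checking the query plan before and after a change is a cheap way to confirm that an index is actually used. It also helps avoid adding indexes that only cost write performance.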

Conclusion

Effective data platform engineering is the backbone of any organization that aims to harness the power of its data. By clearly defining the role of a data platform engineer and emphasizing collaboration, organizations can align their data initiatives with business objectives and deliver meaningful insights.

The following practices are essential for success in this domain:

- Designing robust data architectures

- Implementing stringent security measures

- Optimizing storage and retrieval processes

Modular designs and effective data modeling enhance flexibility, while cloud integration and strong governance frameworks support compliance and security. Optimizing data storage through techniques such as compression and indexing can also significantly boost efficiency and user satisfaction.

As organizations progress in their data-driven journeys, embracing these practices becomes crucial. Investing in skilled data platform engineers and fostering a culture of collaboration and continuous improvement will enhance operational efficiency and help organizations adapt swiftly to future challenges and opportunities in the evolving field of data engineering.

Frequently Asked Questions

What is the role of a Data Platform Engineer?

A Data Platform Engineer is responsible for designing, constructing, and maintaining the infrastructure that supports data processing, storage, and analysis.

What are the key responsibilities of a Data Platform Engineer?

Key responsibilities include data architecture design, building and improving data pipelines, collaborating with stakeholders, implementing monitoring solutions, and ensuring compliance with industry regulations.

Why is data architecture design important in this role?

Data architecture design is crucial because it produces scalable, efficient systems that can manage large data volumes while preserving data integrity and accessibility, which is essential for data-driven decision-making.

How does a Data Platform Engineer collaborate with stakeholders?

They work closely with data scientists, analysts, and business stakeholders to understand their data needs and ensure the platform aligns with organizational requirements.

What is the significance of monitoring solutions in data platform engineering?

Monitoring solutions track data quality and system performance, allowing engineers to make the adjustments needed to optimize operations and keep data delivery reliable.

Why is data platform engineering critical in regulated sectors like finance and healthcare?

It ensures that data-handling practices meet the stringent regulatory and security requirements of these sectors.

What is the expected salary trend for Platform Engineers in financial services and healthcare by 2026?

Entry-level salaries are expected to begin above $95,000, while senior positions may surpass $300,000, reflecting the growing demand for skilled professionals in these fields.

How can organizations improve their recruitment and retention strategies for Data Platform Engineers?

By clearly defining the responsibilities of the role, organizations can attract and retain skilled engineers who are well prepared to advance their data initiatives.