Introduction

As organizations increasingly rely on data to drive decision-making, the methods used to manage that data are undergoing a significant transformation. This article compares traditional data engineering techniques, which have dominated for decades, with modern innovations that promise greater flexibility and efficiency. With the rise of real-time processing and AI integration, a pressing question arises: can traditional methods keep pace with the demands of a rapidly evolving data landscape, or are they destined to become obsolete?

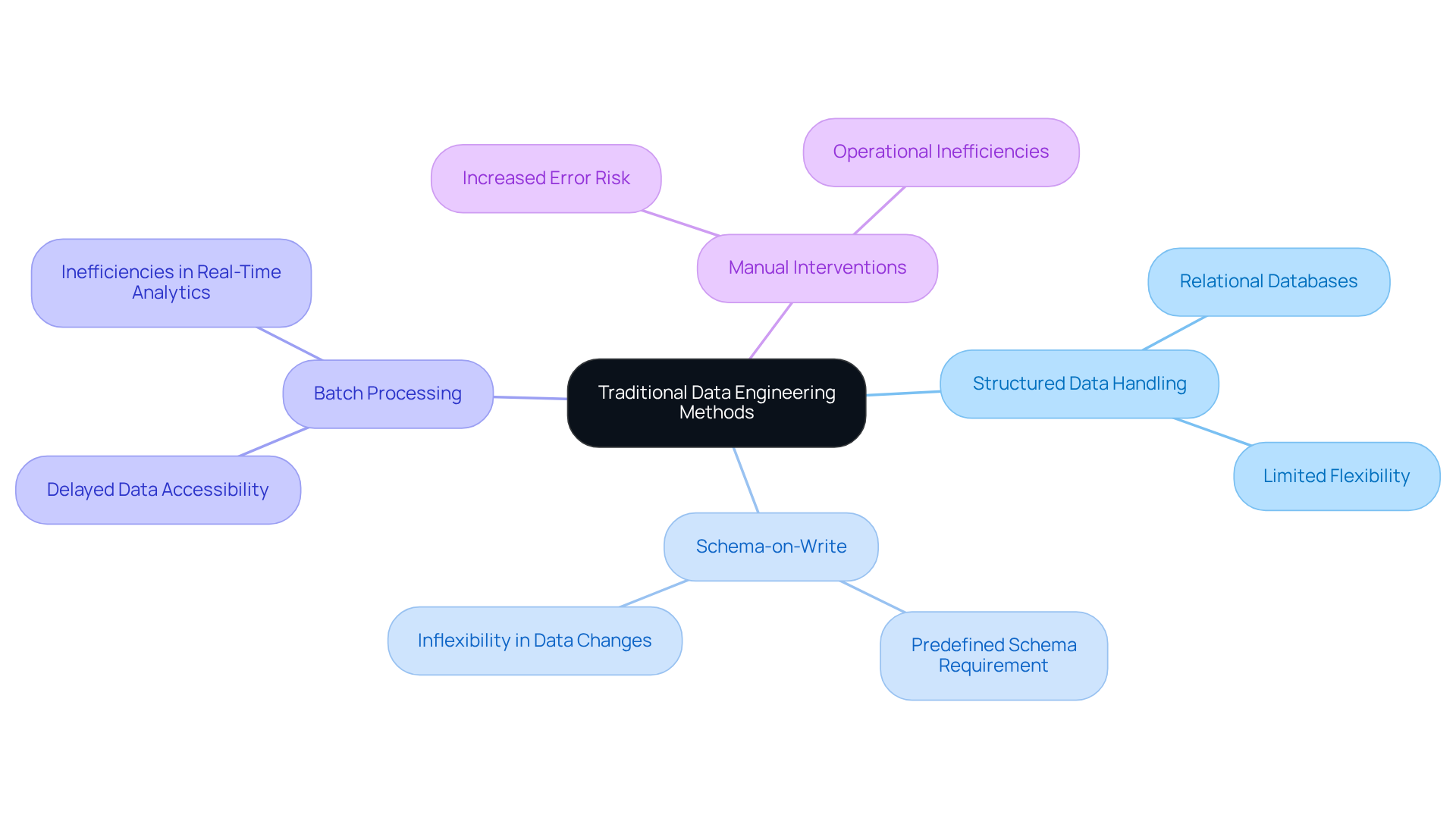

Overview of Traditional Data Engineering Methods

Conventional data engineering approaches primarily revolve around the Extract, Transform, Load (ETL) process. This method involves retrieving data from multiple sources, converting it into an appropriate format, and loading it into a repository for analysis. Key characteristics of this approach include:

- Structured Data Handling: Traditional methods excel in managing structured data, often relying on relational databases.

- Schema-on-Write: Data must conform to a predefined schema before being loaded, which can limit flexibility.

- Batch Processing: Data is processed in groups (batches), which delays its availability for immediate analytics.

- Manual Interventions: Many processes require manual oversight, increasing the risk of errors and inefficiencies.
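To make the three ETL stages and the schema-on-write constraint concrete, here is a minimal Python sketch rather than a production pipeline. It assumes two hypothetical in-memory sources (`CRM_ROWS` and `BILLING_ROWS`) and uses an in-memory SQLite database as a stand-in for the analytical repository; the function names `extract`, `transform`, and `load` are illustrative only.

```python
import sqlite3
from typing import Iterable

# Hypothetical source records from two systems (e.g. a CRM export and a billing file).
CRM_ROWS = [{"id": "1", "name": "Ada", "amount": "120.50"}]
BILLING_ROWS = [
    {"id": "2", "name": "Grace", "amount": "80"},
    {"id": "3", "name": "Linus", "amount": "n/a"},  # malformed amount
]

def extract() -> list[dict]:
    """Extract: pull raw records from every source into one batch."""
    return CRM_ROWS + BILLING_ROWS

def transform(rows: Iterable[dict]) -> list[tuple[int, str, float]]:
    """Transform: coerce each record to the target schema before loading.
    Records that do not fit the schema are rejected (schema-on-write)."""
    clean = []
    for row in rows:
        try:
            clean.append((int(row["id"]), row["name"].strip(), float(row["amount"])))
        except (KeyError, ValueError):
            # Rejected rows typically need someone to inspect and fix them by hand.
            print(f"rejected: {row}")
    return clean

def load(conn: sqlite3.Connection, rows: list[tuple[int, str, float]]) -> None:
    """Load: write the whole batch into a table with a predefined schema."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales "
        "(id INTEGER PRIMARY KEY, name TEXT NOT NULL, amount REAL NOT NULL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(conn, transform(extract()))
print(conn.execute("SELECT COUNT(*), SUM(amount) FROM sales").fetchone())
```

The malformed record is rejected during transformation and never reaches the repository; in many traditional setups, rejects like this are exactly what ends up requiring manual intervention.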

While these methods have served industries for decades, they are increasingly viewed as insufficient for contemporary data requirements. This is particularly true in fields such as finance and healthcare, where real-time insights are essential. By 2026, over 90 percent of mid-to-large organizations are expected to use cloud repositories, reflecting a significant shift toward more agile data-handling practices. Specialists emphasize that data processing must move beyond conventional ETL frameworks to keep pace with rapidly changing requirements and technology. Furthermore, 77 percent of organizations rate their data quality as average or worse, underscoring the challenges of conventional data management. Additionally, 55 percent of AI-driven applications require near-real-time data ingestion, which makes modern data solutions especially urgent in regulated sectors. As noted by Shamnad Mohamed Shaffi, zero-ETL represents a transformative approach that eliminates the complexities associated with traditional ETL processes.

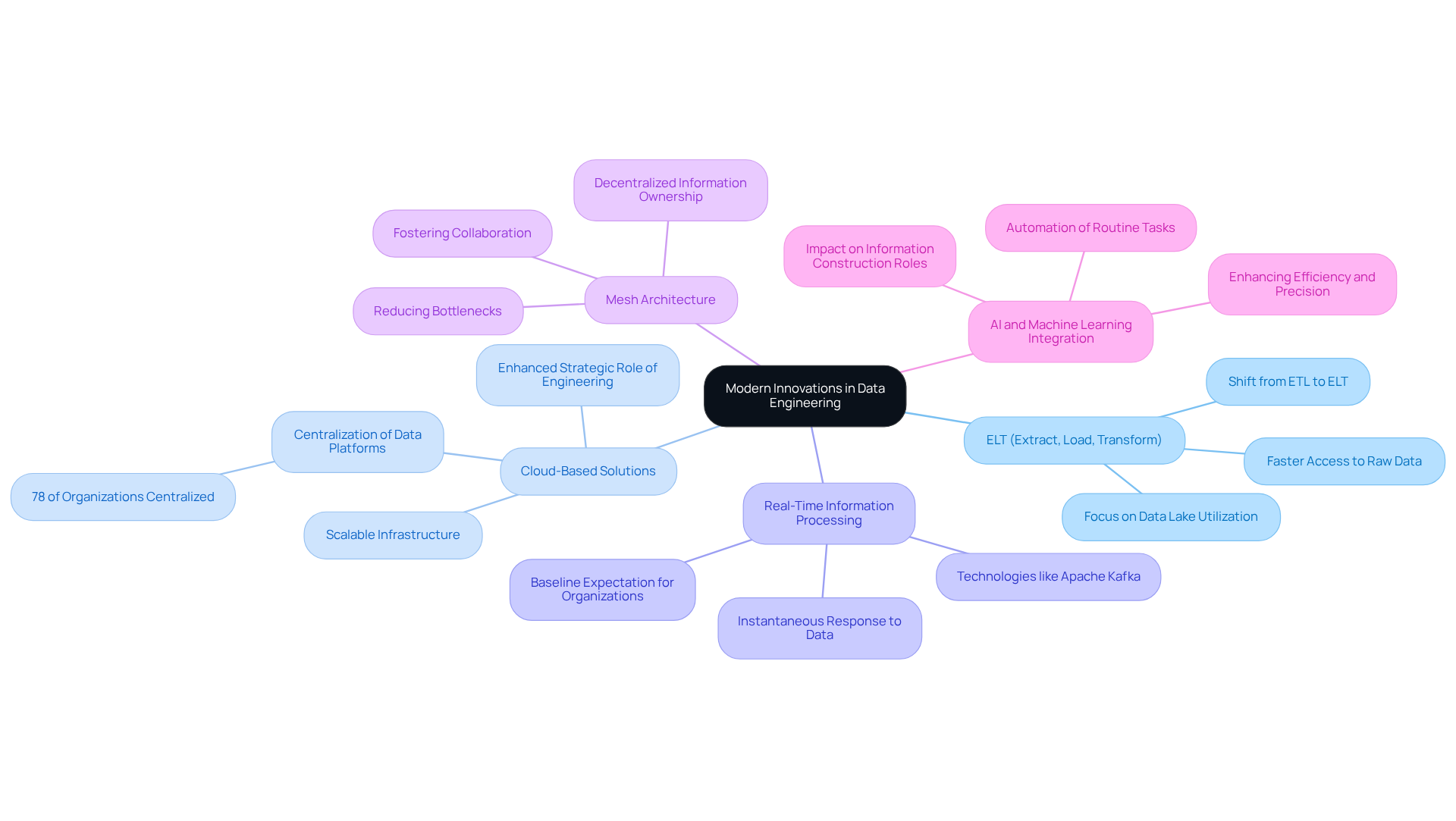

Exploration of Modern Innovations in Data Engineering

Contemporary advancements in data engineering emphasize flexibility, scalability, and real-time processing, fundamentally transforming how organizations manage and use data. Key advancements include:

- ELT (Extract, Load, Transform): This method loads data into a data lake before transforming it, giving analysts quicker access to raw data. By 2026, ELT adoption is projected to surpass that of traditional ETL as organizations recognize the efficiency gains of working directly with raw data. Notably, the field of information science is anticipated to shift its focus away from micro-level quality management, reflecting evolving development practices.

- Cloud-Based Solutions: Platforms such as AWS, Google Cloud, and Azure offer scalable infrastructure that adjusts dynamically to varying data workloads. Approximately 78% of organizations have centralized their data platforms, elevating the strategic role of data engineering within the enterprise.

- Real-Time Data Processing: Technologies like Apache Kafka and Apache Flink enable continuous stream processing, so organizations can respond to incoming data as it arrives. This capability is becoming a baseline expectation, and companies use these tools to gain a competitive advantage.

- Data Mesh Architecture: This decentralized approach encourages domain-oriented data ownership, fostering collaboration and reducing bottlenecks in data access and processing.

- AI and Machine Learning Integration: Integrating AI into data processing workflows automates routine tasks, improving efficiency and precision. As generative AI evolves, it is expected to become a fundamental layer of data processing, optimizing workflows and improving data quality. Furthermore, data engineering roles are being affected by AI more significantly than other technology roles, indicating a profound transformation within the field.
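For contrast with the ETL flow, the following sketch illustrates the ELT ordering under simple assumptions: the hypothetical `RAW_EVENTS` records are loaded as untyped text into a staging table, and the transformation runs afterward as SQL inside the destination itself. An in-memory SQLite database stands in for the data lake, and the table names `raw_sales` and `daily_sales` are invented for illustration.

```python
import sqlite3

# Hypothetical raw events landed as-is; every field is stored as text.
RAW_EVENTS = [
    ("1", "Ada", "120.50", "2025-01-03"),
    ("2", "Grace", "80", "2025-01-03"),
    ("3", "Linus", "n/a", "2025-01-04"),
]

conn = sqlite3.connect(":memory:")

# Extract + Load: copy the raw data into a staging table without reshaping it.
conn.execute("CREATE TABLE raw_sales (id TEXT, name TEXT, amount TEXT, sale_date TEXT)")
conn.executemany("INSERT INTO raw_sales VALUES (?, ?, ?, ?)", RAW_EVENTS)

# Transform: runs later, inside the destination, using its own SQL engine.
# Only amounts that start with a digit are kept (a deliberately crude check).
conn.execute("""
    CREATE TABLE daily_sales AS
    SELECT sale_date, COUNT(*) AS orders, SUM(CAST(amount AS REAL)) AS revenue
    FROM raw_sales
    WHERE amount GLOB '[0-9]*'
    GROUP BY sale_date
""")
print(conn.execute("SELECT * FROM daily_sales ORDER BY sale_date").fetchall())
```

Because the raw table is retained, another team could later derive a different view from the same data without re-extracting it from the sources.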

These innovations address the limitations of traditional approaches, making data management more adaptable to the needs of enterprises operating in dynamic environments.

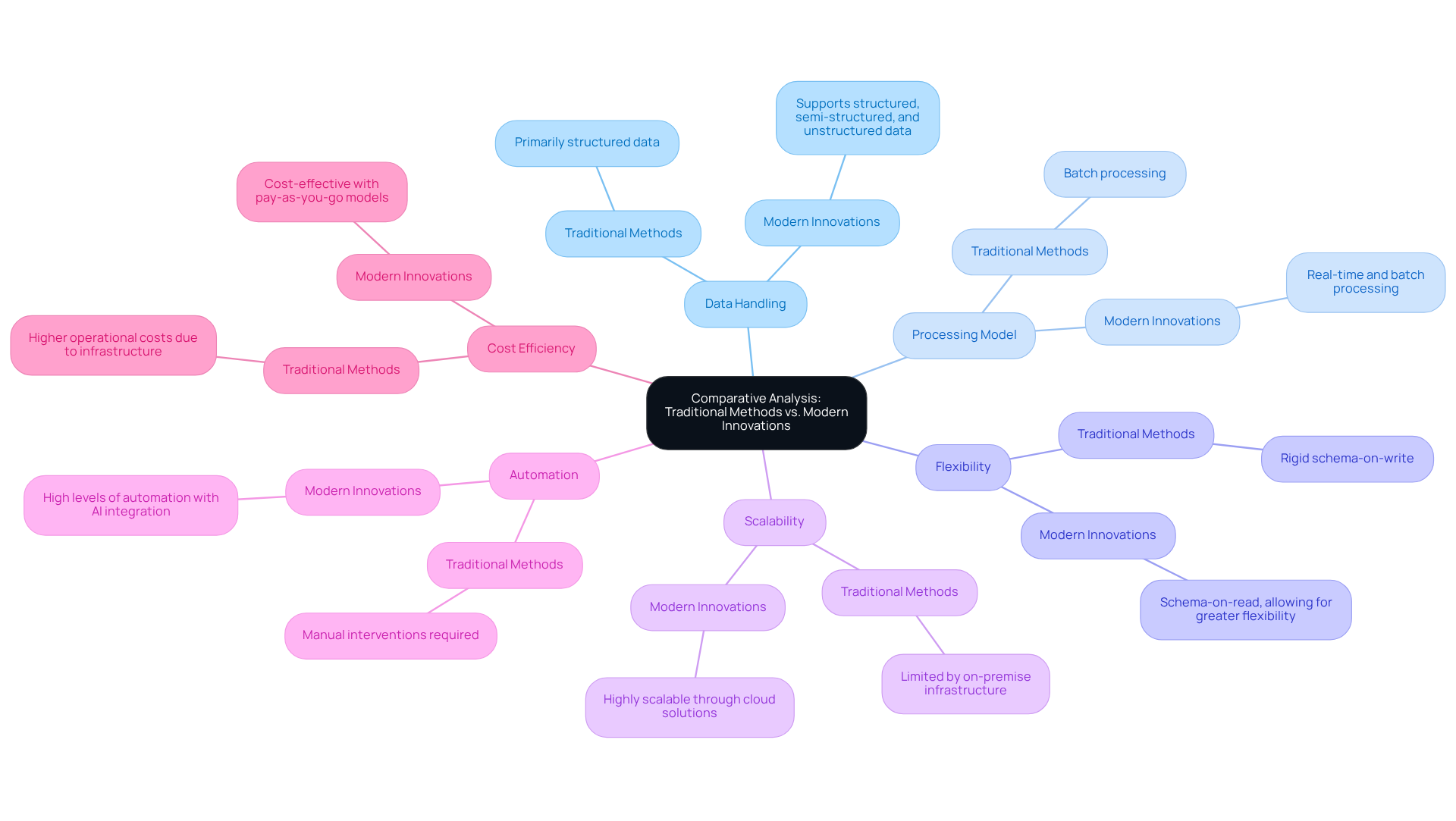

Comparative Analysis: Traditional Methods vs. Modern Innovations

| Criteria | Traditional Methods | Modern Innovations |
|---|---|---|
| Data Handling | Primarily structured data | Supports structured, semi-structured, and unstructured data |
| Processing Model | Batch processing | Real-time and batch processing |
| Flexibility | Rigid schema-on-write | Schema-on-read, allowing for greater flexibility |
| Scalability | Limited by on-premise infrastructure | Highly scalable through cloud solutions |
| Automation | Manual interventions required | High levels of automation with AI integration |
| Cost Efficiency | Higher operational costs due to infrastructure | Cost-effective with pay-as-you-go models |

This analysis indicates that while traditional methods possess certain advantages, modern innovations offer significant improvements in flexibility, scalability, and efficiency. These attributes make modern approaches more suitable for contemporary data-driven organizations.
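The flexibility comparison (schema-on-write versus schema-on-read) can be illustrated with a small, hypothetical example, assuming JSON records from a source whose format later gains a `channel` field. In this sketch, schema-on-write rejects any record that does not exactly match the predefined fields (a real system might instead silently drop the extra column), whereas schema-on-read keeps the raw text and applies a schema only at query time. The function names and fields are illustrative assumptions.

```python
import json

# Hypothetical incoming records: the second has a field the original schema never defined.
INCOMING = [
    '{"id": 1, "amount": 120.5}',
    '{"id": 2, "amount": 80, "channel": "mobile"}',
]
WRITE_SCHEMA = {"id", "amount"}  # columns agreed on before any data is loaded

def schema_on_write(lines):
    """Validate at load time: records whose fields differ from the schema are rejected."""
    stored, rejected = [], []
    for line in lines:
        rec = json.loads(line)
        (stored if set(rec) == WRITE_SCHEMA else rejected).append(rec)
    return stored, rejected

def schema_on_read(lines, fields):
    """Store raw text untouched; project the requested fields only when queried."""
    raw_store = list(lines)  # nothing is validated or dropped on the way in
    return [{f: json.loads(line).get(f) for f in fields} for line in raw_store]

stored, rejected = schema_on_write(INCOMING)
print(len(stored), len(rejected))  # 1 1
print(schema_on_read(INCOMING, ["id", "channel"]))
```

With schema-on-write, the new `channel` attribute is unusable until the schema is changed; with schema-on-read, a query can start using it immediately, at the cost of handling missing values (`None` here) at read time.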

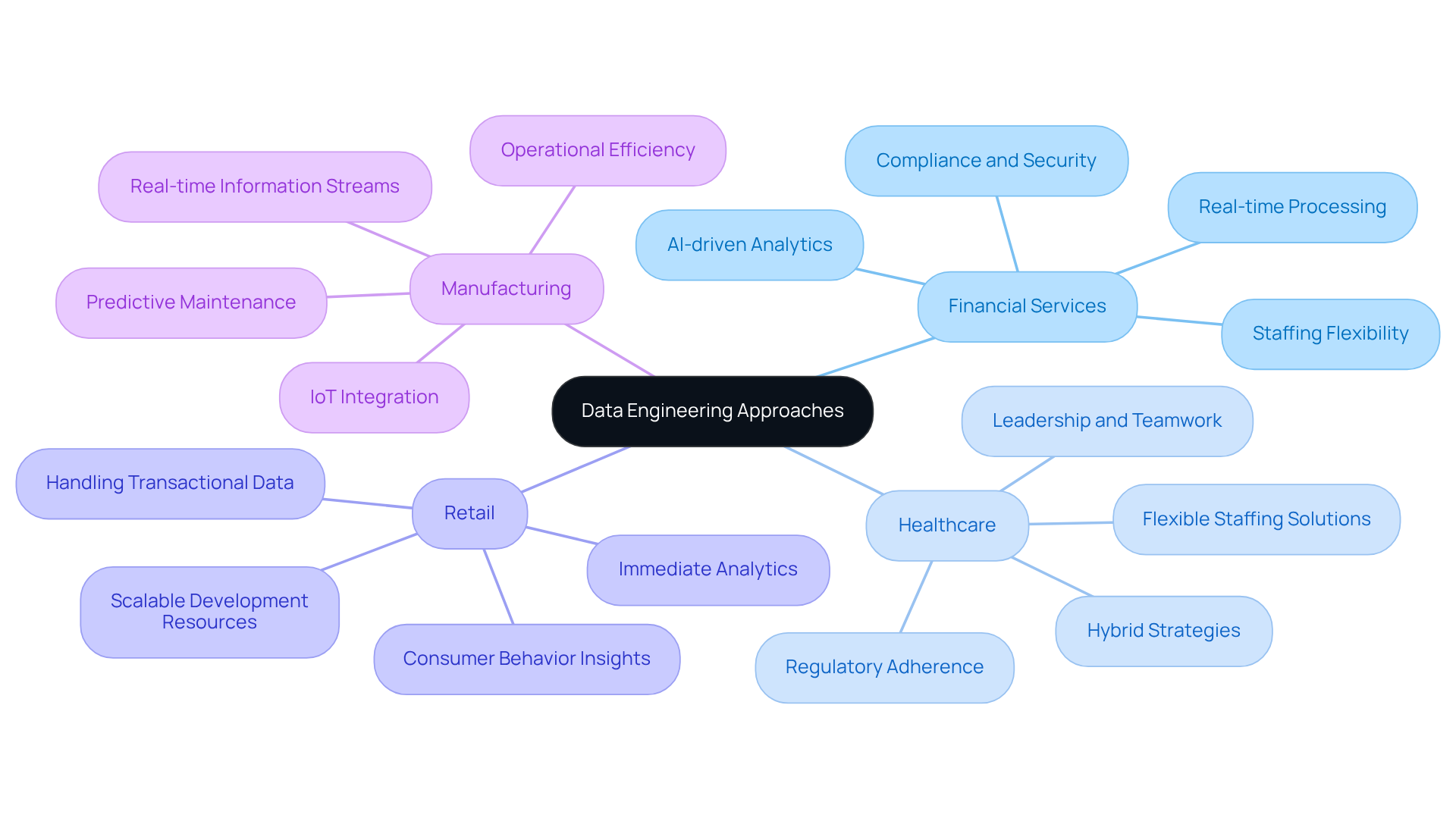

Choosing the Right Approach: Suitability for Different Industries

When selecting a data engineering approach, organizations must consider their specific industry requirements:

Financial Services: This sector demands stringent compliance and security measures due to the sensitive nature of financial data. Innovations such as real-time processing are critical for effective risk management and fraud detection. Neutech’s focus on hiring developers with a strong work ethic and communication skills ensures that teams can navigate these complexities effectively. Additionally, our month-to-month staffing flexibility allows financial institutions to scale resources as needed.

Healthcare: Managing sensitive patient information necessitates adherence to strict regulations. A hybrid strategy that merges conventional and contemporary techniques can protect information integrity while enabling prompt insights. Neutech’s commitment to leadership and teamwork among its engineers fosters a collaborative environment crucial for meeting these regulatory demands. Furthermore, our flexible staffing solutions enable healthcare providers to adjust their teams based on fluctuating needs.

Retail: This industry thrives on immediate analytics to enhance customer experiences. Contemporary data processing techniques are especially effective at handling large volumes of transactional data quickly, allowing retailers to respond to consumer behavior and preferences in real time. Neutech’s ability to provide specialized engineering talent on a month-to-month basis allows retailers to scale their development resources efficiently.

Manufacturing: Incorporating IoT data for predictive maintenance is vital in this sector. Contemporary methods that support real-time data streams are essential for operational efficiency, enabling manufacturers to anticipate equipment failures and optimize production processes. Neutech’s flexible staffing model helps manufacturers adapt to changing technological landscapes with ease.
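A rolling average over streaming sensor readings gives a rough sense of how real-time data can flag a machine before it fails. This is a simplified stand-in, not a Kafka or Flink program: it assumes readings arrive in order through an ordinary Python iterable, and the function name `rolling_alerts`, the window size, the threshold, and the sample values are all hypothetical. Production predictive-maintenance systems often rely on a stream processor's windowing features and trained models rather than a fixed threshold.

```python
from collections import deque
from typing import Iterable, Iterator

def rolling_alerts(
    readings: Iterable[float], window: int = 3, threshold: float = 80.0
) -> Iterator[tuple[int, float]]:
    """Consume readings one at a time and yield (index, rolling mean)
    whenever the mean of the last `window` readings exceeds `threshold`."""
    recent = deque(maxlen=window)  # only the latest `window` readings are kept
    for i, value in enumerate(readings):
        recent.append(value)
        if len(recent) == window:
            mean = sum(recent) / window
            if mean > threshold:
                yield i, mean

# Hypothetical bearing temperature readings (°C) from one machine, arriving in order.
stream = [70.0, 72.0, 75.0, 79.0, 84.0, 88.0, 91.0]
for idx, mean in rolling_alerts(stream):
    print(f"reading {idx}: rolling mean {mean:.1f} exceeds threshold")
```

Because each reading is processed as it arrives and only a small window is held in memory, alerts are raised without waiting for a batch job to finish.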

By understanding the unique needs of their industry and leveraging Neutech’s specialized engineering talent, organizations can make informed decisions about which data engineering methods to adopt, ensuring they remain competitive and compliant.

Conclusion

The landscape of data engineering is evolving rapidly, necessitating a shift from traditional methods to modern innovations that better meet the demands of today’s data-driven environments. While conventional approaches like ETL have served organizations well for years, their limitations in flexibility, scalability, and real-time processing highlight the urgent need for more adaptive solutions. Embracing modern methodologies allows organizations to harness the full potential of their data, ensuring they remain competitive in an increasingly complex market.

Key insights from the comparative analysis reveal that modern innovations, such as ELT, cloud-based solutions, and real-time processing, significantly enhance data management capabilities. These advancements not only improve efficiency but also facilitate better decision-making across various industries, from finance to healthcare. Moreover, the integration of AI and machine learning into data workflows automates routine tasks, further streamlining operations and reducing the risk of human error.

As organizations navigate their data engineering futures, it is crucial to recognize the specific needs of their sectors and choose the appropriate methodologies accordingly. By leveraging the strengths of modern data engineering techniques, businesses can enhance their operational efficiency, ensure compliance with industry regulations, and ultimately drive innovation. The transition to contemporary data practices is not merely advantageous; it is essential for thriving in an era defined by rapid technological change and increasing data complexity.

Frequently Asked Questions

What is the traditional data engineering method primarily based on?

The traditional data engineering method is primarily based on the Extract, Transform, Load (ETL) process, which involves retrieving information from multiple sources, converting it into an appropriate format, and loading it into a repository for analysis.

What are the key characteristics of traditional data engineering methods?

Key characteristics include structured data handling, schema-on-write requirements, batch processing, and the need for manual interventions.

How do traditional methods handle structured data?

Traditional methods excel in managing structured data and often rely on relational databases for this purpose.

What does schema-on-write mean in the context of traditional data engineering?

Schema-on-write means that data must conform to a predefined schema before being loaded into the system, which can limit flexibility.

What is the impact of batch processing in traditional data engineering?

Batch processing involves processing information in groups, which can lead to delays in accessibility for immediate analytics.

Why are manual interventions a concern in traditional data engineering methods?

Manual interventions increase the risk of errors and inefficiencies in the data processing workflow.

Why are traditional data engineering methods considered insufficient for modern needs?

They are viewed as insufficient because they struggle to meet the demands for real-time insights, particularly in industries like finance and healthcare.

What is the anticipated trend for organizations regarding cloud repositories by 2026?

By 2026, over 90 percent of mid-to-large organizations are expected to employ cloud repositories, indicating a shift towards more agile information handling practices.

What challenges do organizations face with conventional information management?

Seventy-seven percent of organizations rate their data quality as average or worse, highlighting the challenges of conventional data management.

What is zero-ETL, and why is it significant?

Zero-ETL is a transformative approach that eliminates the complexities associated with traditional ETL processes, making it significant for adapting to the evolving needs of data engineering.

List of Sources

- Overview of Traditional Data Engineering Methods

  - Industry News 2025: The Zero ETL Paradigm Transforming Enterprise Data Integration in Real Time (https://isaca.org/resources/news-and-trends/industry-news/2025/the-zero-etl-paradigm-transforming-enterprise-data-integration-in-real-time)

  - How AI is Transforming Data Engineering (https://databricks.com/blog/how-ai-transforming-data-engineering)

  - Data Engineering Stats 2026: Latest Market Insights & Trends (https://data.folio3.com/blog/data-engineering-stats)

  - How the last 6 months of AI rewrote the rules of data engineering (https://datapro.news/p/how-last-6-months-of-ai-rewrote-the-rules-of-data-engineering)

  - ETL to dbt: Evolution of Data Engineering (Sumit Gupta, LinkedIn) (https://linkedin.com/posts/sumonigupta_for-years-etl-was-the-standard-extract-activity-7432401162567335936-ND2p)

- Exploration of Modern Innovations in Data Engineering

  - Data Engineering Trends in 2026: Key Innovations & Future Insights (https://softwebsolutions.com/resources/data-engineering-trends)

  - Data Engineering in 2026: What Changes? (https://gradientflow.substack.com/p/data-engineering-for-machine-users)

  - Refonte Learning: Data Engineering in 2026: Trends, Tools, and How to Thrive (https://refontelearning.com/blog/data-engineering-in-2026-trends-tools-and-how-to-thrive)

  - Data Engineering in 2026: 12 Predictions (https://datafold.com/blog/data-engineering-in-2026-predictions)

  - From ETL to Autonomy: Data Engineering in 2026 (https://thenewstack.io/from-etl-to-autonomy-data-engineering-in-2026)

- Comparative Analysis: Traditional Methods vs. Modern Innovations

  - Modern Data Platform Vs. Traditional Data Platform: Where To Invest Your Time And Money? (https://affine.medium.com/modern-data-platform-vs-traditional-data-platform-where-to-invest-your-time-and-money-6c4ddb5543a9)

  - Modern Data Stack 2026: Building the Foundation for AI Success (https://alation.com/blog/modern-data-stack-explained)

  - The battle of old and new: Traditional vs modern data platforms (https://visium.com/articles/the-battle-of-old-and-new-traditional-vs-modern-data-platforms)

  - Refonte Learning: Data Engineering in 2026: Trends, Tools, and How to Thrive (https://refontelearning.com/blog/data-engineering-in-2026-trends-tools-and-how-to-thrive)

- Choosing the Right Approach: Suitability for Different Industries

  - Major Healthcare Data Security Trends in 2025 – Bluesight (https://bluesight.com/news/major-healthcare-data-security-trends-in-2025)

  - How Advanced Data Analytics Drives Decision-Making in Financial Services (https://biztechmagazine.com/article/2025/08/how-advanced-data-analytics-drives-decision-making-financial-services)

  - How Financial Firms Are Transforming Data Management (https://dtcc.com/dtcc-connection/articles/2025/september/30/how-financial-firms-are-transforming-data-management)

  - Providers Evaluate Security as Updated HIPAA Compliance Looms (https://healthtechmagazine.net/article/2026/01/providers-evaluate-security-updated-hipaa-compliance-looms)